Efficient Interactions for Reconstructing Complex Buildings via Joint Photometric and Geometric Saliency Segmentation

Apr 11, 2021

Bo Xu

Han Hu

Qing Zhu*

Xuming Ge

Yigao Jin

Haojia Yu

Ruofei Zhong

Abstract

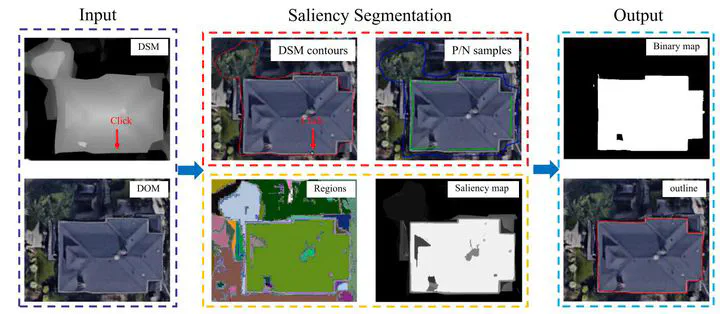

The reconstruction of level-of-detail 2 (LOD-2) buildings has drawn considerable attention over the past two decades. Because fully automatic reconstruction approaches still face many difficulties in industrial solutions, efficient and robust interactive modeling tools are in high demand. This paper proposes an interactive LOD-2 modeling approach that efficiently generates boundary-precise and topologically correct building models from Digital Surface Model (DSM) and Digital Orthophoto Map (DOM) data. Click-based saliency segmentation, which unifies photometric and geometric information, is first adopted to extract the outlines of building primitives. These outlines decompose complex-shaped buildings into predefined primitive types. The primitives are then enriched with additional semantic key points, which are essential for inferring the primitives’ shapes and positions. Finally, the models are built using constructive solid geometry, assembling partial primitives into complex buildings. The order in which the primitives are created is irrelevant, and the sketches and semantic key points need not be perfect. Users need only a few simple operations to generate the integral models, mostly several clicks on a 2D platform. Experimental results on various datasets demonstrate that the proposed approach can minimize the amount of interaction while retaining the ability to handle various roof types. The quality indices of the integral models achieve a precision of 92.3% at the pixel level and 82.5% at the object level.
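To give a rough intuition for how a single click can combine photometric (DOM) and geometric (DSM) cues, the sketch below grows a region outward from a clicked pixel using a weighted sum of intensity and height differences. This is plain seeded region growing, swapped in for illustration only; it is not the saliency segmentation used in the paper. The function name `grow_from_click`, the weights `w_photo` and `w_geom`, and the `threshold` value are all hypothetical.

```python
from collections import deque


def grow_from_click(dom, dsm, seed, w_photo=0.5, w_geom=0.5, threshold=10.0):
    """Return the set of (row, col) cells 4-connected to `seed` whose joint
    photometric/geometric distance to the seed cell is below `threshold`.

    dom: 2D list of orthophoto intensities (hypothetical grey values).
    dsm: 2D list of surface heights on the same grid.
    The weights and threshold are illustrative assumptions; real data would
    need the two cues normalised to comparable ranges first.
    """
    rows, cols = len(dom), len(dom[0])
    r0, c0 = seed
    ref_intensity, ref_height = dom[r0][c0], dsm[r0][c0]

    region = {seed}
    queue = deque([seed])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue  # outside the raster
            if (nr, nc) in region:
                continue  # already accepted
            # Joint cost: weighted photometric plus geometric difference
            # between the candidate cell and the clicked cell.
            cost = (w_photo * abs(dom[nr][nc] - ref_intensity)
                    + w_geom * abs(dsm[nr][nc] - ref_height))
            if cost < threshold:
                region.add((nr, nc))
                queue.append((nr, nc))
    return region


# Toy 4x4 scene: a bright, elevated 2x2 roof surrounded by dark, flat ground.
dom = [
    [50, 50, 50, 50],
    [50, 200, 200, 50],
    [50, 200, 200, 50],
    [50, 50, 50, 50],
]
dsm = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 9.0, 9.0, 0.0],
    [0.0, 9.0, 9.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]

roof = grow_from_click(dom, dsm, seed=(1, 1))    # click on the roof
ground = grow_from_click(dom, dsm, seed=(0, 0))  # click on the ground
```

In this toy scene a click on the roof selects only the four roof cells, because the surrounding ground differs in both intensity and height. In practice either cue alone can be ambiguous, for example a grey roof next to grey pavement, or a roof level with adjacent tree canopy, which is the motivation for combining them.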

Publication

ISPRS Journal of Photogrammetry and Remote Sensing