Real-time Rendering of Large-scale Point Cloud without Structured Searching Index by Random Filling

JIN Yigao, HU Han, DING Yulin, ZHU Qing

Faculty of Geosciences and Engineering, Southwest Jiaotong University, Chengdu, China

Abstract

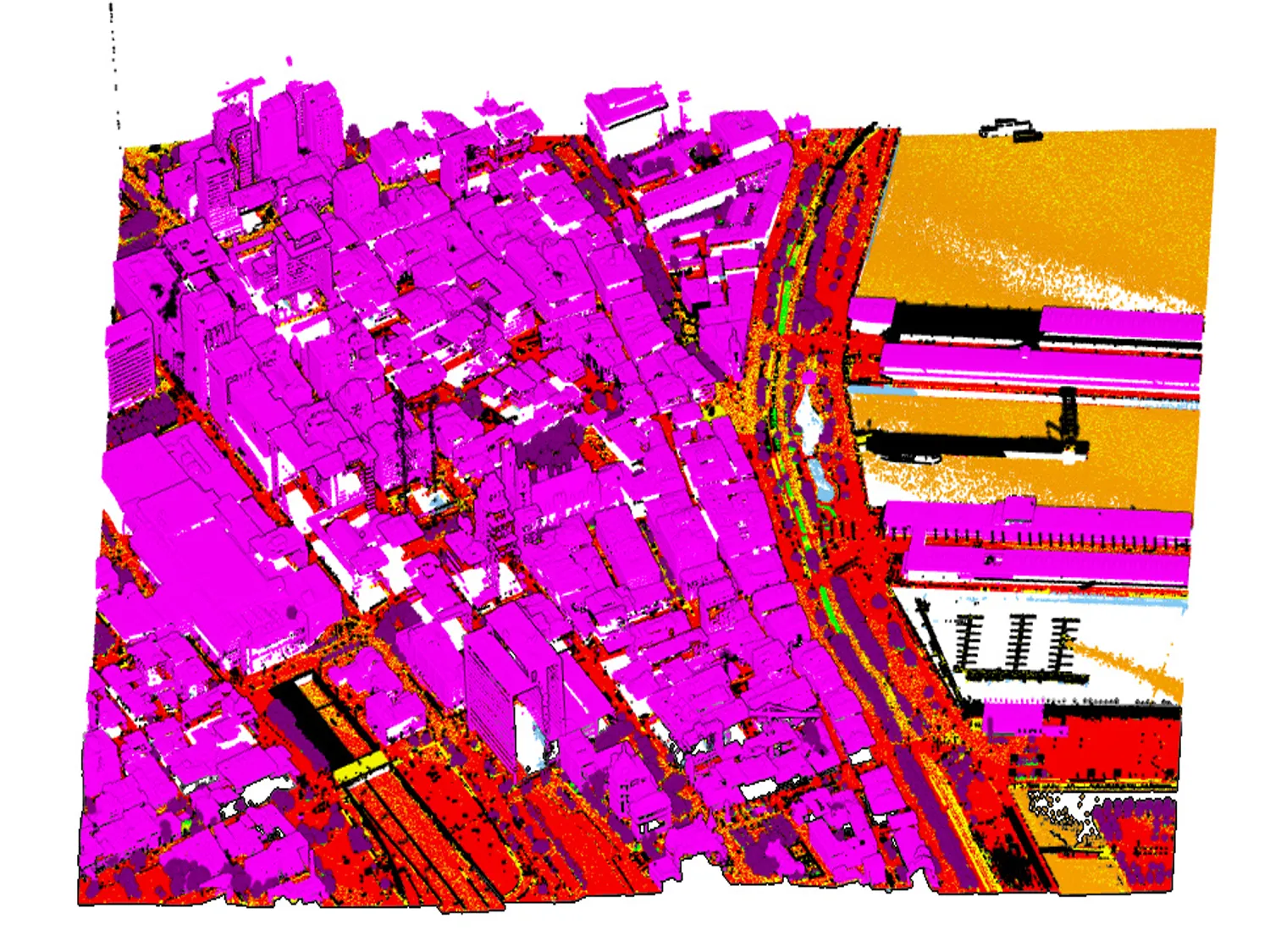

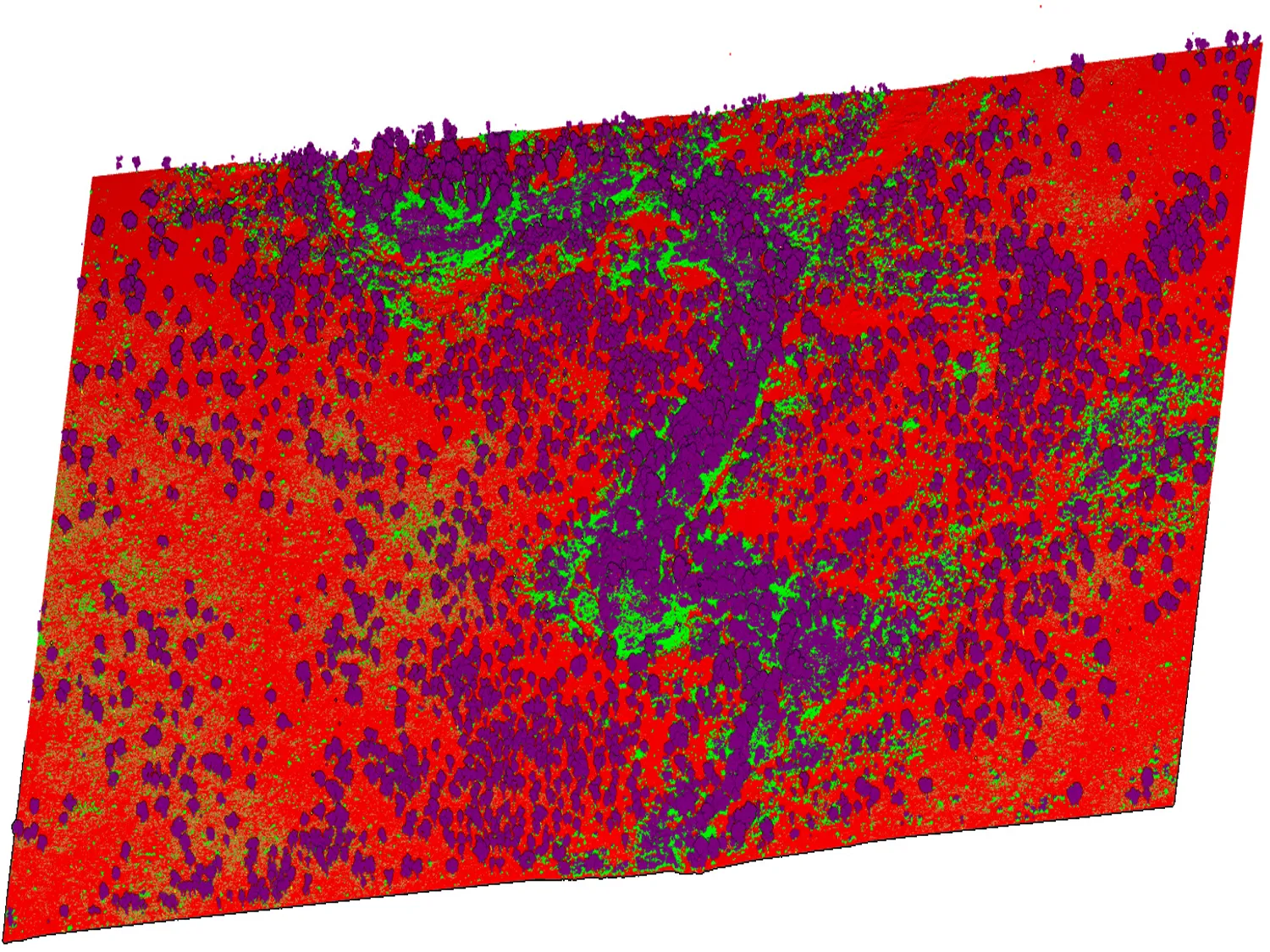

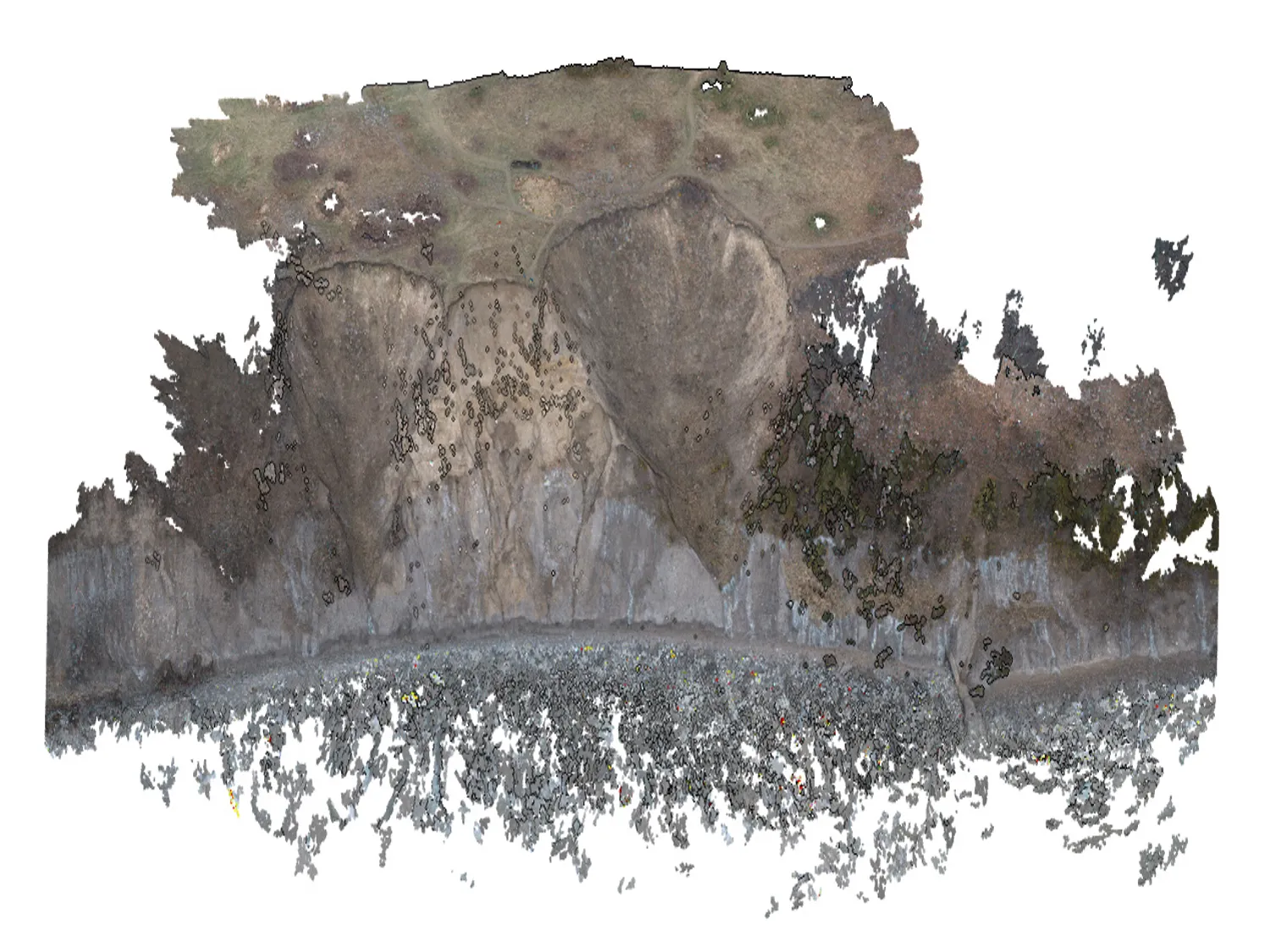

Point clouds, whether from laser scanning or photogrammetric pipelines, have become arguably the most popular data source in the photogrammetry community. For efficiency, most current approaches to point cloud rendering require a prebuilt search index such as an octree or KD-tree. Unfortunately, such a tree structure is static and hard to update dynamically. This requirement makes it difficult to edit point clouds directly in easy-to-use software: even standard “undo” and “redo” operations are extremely hard, if not impossible, to implement. To address this issue, this paper proposes a strategy for rendering large-scale point clouds without a tree structure. The basic idea is simple: we reuse information by reprojecting the render buffer of the previous frame, and use random sampling to fill the holes in the current frame. Based on reprojection and filling, the proposed method performs real-time, in-core rendering of large-scale point clouds that fit in main memory. The method does not require multi-resolution spatial structures to be generated in advance. On this basis, we developed a real-time rendering system for large-scale point clouds that runs even on notebook computers with medium- to low-end hardware. Experimental evaluations on various datasets collected from both aerial and terrestrial platforms show that the system renders large-scale unstructured point clouds efficiently, and that it is one to two orders of magnitude faster than traditional structured rendering methods.
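The reproject-then-fill idea can be illustrated with a minimal CPU sketch. It is not the authors' implementation: the pinhole camera that only translates along x, the buffer of point indices, the per-frame `budget` of random samples, and all function names are assumptions made for illustration. Random samples are splatted through a depth test, so they fill holes statistically rather than targeting empty pixels explicitly.

```python
import random

def project(point, cam_x, width, height, focal):
    """Pinhole projection for a hypothetical camera at (cam_x, 0, 0) looking down +z."""
    x, y, z = point
    if z <= 0.0:
        return None  # behind the camera
    u = int(round(focal * (x - cam_x) / z + width / 2))
    v = int(round(focal * y / z + height / 2))
    if 0 <= u < width and 0 <= v < height:
        return u, v, z
    return None  # outside the viewport

def splat(buffer, depth, points, idx, cam_x, width, height, focal):
    """Write point idx into the buffer if it is the nearest point seen at its pixel."""
    hit = project(points[idx], cam_x, width, height, focal)
    if hit is None:
        return
    u, v, z = hit
    pix = v * width + u
    if z < depth[pix]:
        depth[pix] = z
        buffer[pix] = idx

def render_frame(points, prev_buffer, cam_x, width, height, focal, budget, rng):
    """Render one frame from the previous frame's buffer plus a random sample."""
    buffer = [-1] * (width * height)          # -1 marks a hole
    depth = [float("inf")] * (width * height)
    # Step 1: reproject the points that were visible in the previous frame.
    for idx in prev_buffer:
        if idx >= 0:
            splat(buffer, depth, points, idx, cam_x, width, height, focal)
    # Step 2: draw a fixed budget of random points from the unstructured
    # cloud; those that land on holes (or in front of old points) are kept.
    for _ in range(budget):
        splat(buffer, depth, points, rng.randrange(len(points)),
              cam_x, width, height, focal)
    return buffer

def coverage(buffer):
    """Fraction of pixels that currently hold a point."""
    return sum(1 for idx in buffer if idx >= 0) / len(buffer)
```

Calling `render_frame` repeatedly and passing each returned buffer back in as `prev_buffer` lets the image accumulate detail over frames while each frame touches only the previously visible points plus `budget` new ones, with no octree or KD-tree involved.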

Point Cloud

Video

The video demonstrations are also available on Bilibili.

Acknowledgements

This work was supported in part by the National Key Research and Development Program of China (Project No. 2018YFC0825803) and by the National Natural Science Foundation of China (Project Nos. 41631174, 42071355, 41871291). In addition, the authors gratefully acknowledge ISPRS and EuroSDR for providing the datasets, which were released in conjunction with the ISPRS Scientific Initiatives 2014 and 2015, led by ISPRS ICWG I/Vb.